GP Acceleration

Surrogate energy surfaces via Gaussian process regression for roughly 10x fewer force evaluations

Context

Gaussian process (GP) regression builds a surrogate energy surface on the fly from the force evaluations the saddle search has already computed. The GP predicts energies and forces at unseen configurations and flags where its own uncertainty is highest, so the search spends its next electronic structure calculation where the surrogate is least reliable. Force evaluation counts drop by roughly an order of magnitude relative to the standard dimer method (Goswami et al. 2025).
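As a rough illustration of the predict-and-flag loop described above, here is a minimal sketch of GP regression on a one-dimensional toy energy. It is not the eOn implementation: it fits energies only (the method described here also uses forces), and the squared-exponential kernel, the fixed length scale, the `toy_energy` function, and all function names are assumptions chosen for brevity.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential kernel between point sets a (n, d) and b (m, d)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)

def gp_predict(x_train, y_train, x_query, noise=1e-8):
    """Posterior mean and variance of a zero-mean GP at x_query."""
    k_train = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_cross = rbf_kernel(x_query, x_train)
    chol = np.linalg.cholesky(k_train)  # the O(n^3) step
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_cross @ alpha
    v = np.linalg.solve(chol, k_cross.T)
    var = 1.0 - np.sum(v**2, axis=0)  # prior variance is 1 for this kernel
    return mean, np.maximum(var, 0.0)

def toy_energy(x):
    """Hypothetical double-well energy standing in for an expensive calculation."""
    return x[:, 0] ** 4 - 2.0 * x[:, 0] ** 2

# Configurations that have already been evaluated.
x_train = np.array([[-1.5], [-1.0], [1.0], [1.5]])
y_train = toy_energy(x_train)

# Candidate configurations; the next expensive evaluation goes where
# the surrogate is least certain.
x_query = np.linspace(-2.0, 2.0, 41)[:, None]
mean, var = gp_predict(x_train, y_train, x_query)
x_next = x_query[np.argmax(var), 0]
print(f"next evaluation at x = {x_next:.2f}")
```

In this toy setup the selected point falls in the unexplored region between the two wells, which is where the barrier sits. A production surrogate would also condition on forces and re-optimize its hyperparameters as data arrives.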

The implementation lives in the eOn saddle point search code, runs in plain Cartesian coordinates, and works with any electronic structure code via eOn’s RPC potential interface.

Adaptive pruning

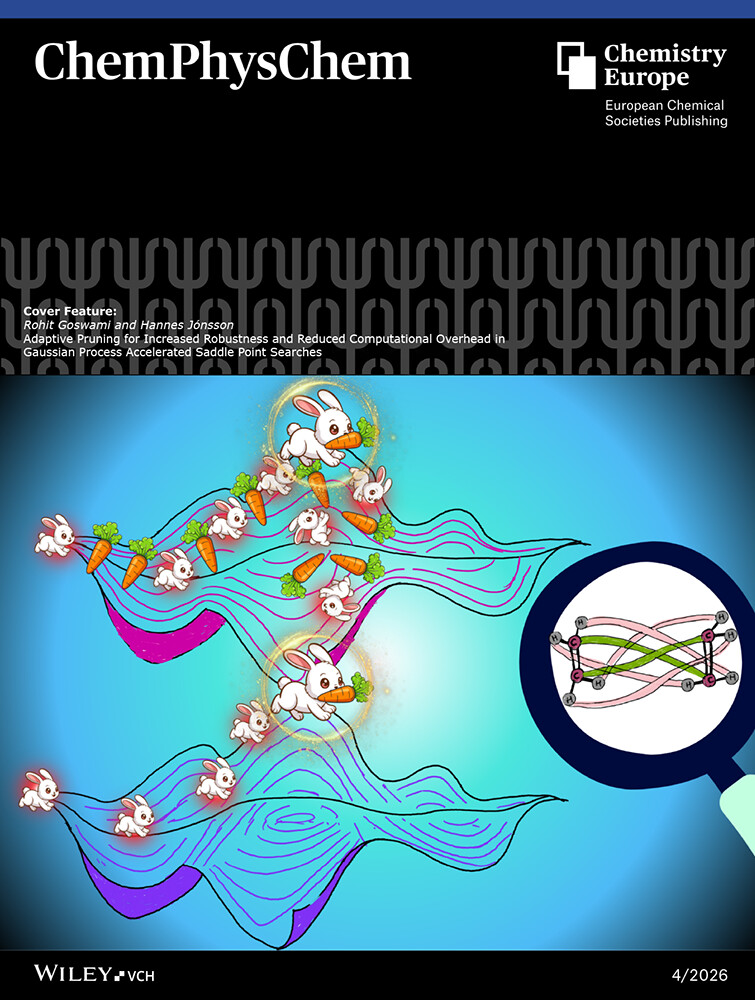

The cost of updating a GP grows cubically with the size of its training set, so on long runs the surrogate becomes the bottleneck before the DFT does. We prune the training set using farthest-point sampling guided by the Earth Mover’s Distance, a permutation-invariant metric for structural similarity, and halve wall time with no loss of accuracy (Goswami and Jónsson 2025). The work ran as the cover feature in ChemPhysChem [1].
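Farthest-point sampling can be sketched in a few lines. The version below is a generic greedy variant working on a precomputed distance matrix; the function name, the choice of starting point, and the one-dimensional toy data are assumptions, and the pruning scheme in the paper may differ in its details. Any permutation-invariant structural distance could supply the matrix.

```python
import numpy as np

def farthest_point_sampling(dist, n_keep, start=0):
    """Greedy farthest-point sampling on a symmetric distance matrix.

    Returns the indices of the retained points, in selection order.
    """
    n_keep = min(n_keep, dist.shape[0])
    selected = [start]
    # Distance from every point to its nearest already-selected point.
    min_dist = dist[start].copy()
    for _ in range(n_keep - 1):
        nxt = int(np.argmax(min_dist))
        selected.append(nxt)
        min_dist = np.minimum(min_dist, dist[nxt])
    return selected

# Toy "training set": six scalar descriptors, with two tight clusters.
points = np.array([0.0, 0.1, 0.2, 5.0, 5.1, 10.0])
dist = np.abs(points[:, None] - points[None, :])

kept = farthest_point_sampling(dist, n_keep=3)
print(kept)  # [0, 5, 3]
```

The retained subset covers the range of the data while dropping near-duplicates, which is what keeps the cubic GP update affordable as the run grows.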

Optimal Transport representation

Chapter 8 of the thesis replaces distances between Cartesian coordinate vectors with the Earth Mover’s Distance (EMD) as the GP’s similarity metric. EMD is permutation-invariant and captures chemically meaningful distances between molecular configurations. Combined with farthest-point sampling for data selection and adaptive trust radii for hyperparameter stability, this yields the Optimal Transport Gaussian Process (OT-GP) framework.
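To make the permutation-invariance point concrete, here is a simplified sketch for a single atomic species with equal weights. Under those assumptions the optimal transport plan is a one-to-one assignment, so a brute-force search over permutations suffices for a handful of atoms. The function name and the three-atom geometry are hypothetical, and the EMD definition used in the thesis may differ (for example in how atom types are handled).

```python
import itertools
import numpy as np

def emd_equal_weights(conf_a, conf_b):
    """EMD between two equally weighted point sets of the same size.

    Brute-force over permutations; only practical for a few atoms.
    """
    n = len(conf_a)
    # cost[i, j] = Euclidean distance from atom i in conf_a to atom j in conf_b
    cost = np.linalg.norm(conf_a[:, None, :] - conf_b[None, :, :], axis=-1)
    best = min(
        sum(cost[i, perm[i]] for i in range(n))
        for perm in itertools.permutations(range(n))
    )
    return best / n

# Three identical atoms (hypothetical geometry, arbitrary units).
conf = np.array([[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [0.0, 2.0, 0.0]])

# Same structure with the atom labels shuffled.
relabeled = conf[[2, 0, 1]]

# Same labels, but one atom displaced by 0.3 along x.
displaced = conf.copy()
displaced[0, 0] += 0.3

cartesian_relabeled = np.linalg.norm(conf - relabeled)
emd_relabeled = emd_equal_weights(conf, relabeled)
emd_displaced = emd_equal_weights(conf, displaced)

print(f"Cartesian distance after relabeling: {cartesian_relabeled:.3f}")
print(f"EMD after relabeling:                {emd_relabeled:.3f}")
print(f"EMD after a 0.3 displacement:        {emd_displaced:.3f}")
```

The Cartesian distance treats the relabeled structure as far away even though it is physically identical, while the EMD is zero for relabeling and responds only to the real displacement. For larger systems a linear assignment solver such as SciPy's `linear_sum_assignment` would replace the brute-force loop.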

Code

- eOn – Lead maintainer; GP saddle search integration

See also: engineering notes for eOn under eOn in Software.

Open directions

- Scaling the OT-GP framework to adaptive kinetic Monte Carlo for long-timescale simulations: millions of atoms, thousands of KMC steps per day.

- Combining GP surrogates with ML potentials (the GP predicts corrections to the ML potential, not the full DFT surface).

- Automatic hyperparameter selection using the EMD landscape structure.

References

[1] Cover design by Ruhila Goswami.